Cinema Before Theory

Sometimes in the Dark and the Origin of an Optical Question (this post is the second part of my previous one, hosted here and on Patreon: www.patreon.com/IAMCCS). I didn’t start thinking about optical imperfection while writing a paper. I started thinking about it when the image began to resist me. Sometimes in the Dark (check […]

Coming soon: paper following up the concept LoRA

We’re proud to present an excerpt of our paper following up on the concept LoRA “Anamorph1x”, a LoRA based on the Z-IMAGE model that simulates the anamorphic cinematic style. Here is the excerpt: “Contemporary generative image systems increasingly equate visual quality with total visibility: ultra-high resolution, uniform sharpness, noise suppression, and spatial coherence. In parallel, streaming distribution has […]

🎥 NEW WORKFLOWS — Qwen-VL Face Swap + Enhanced Qwen Edit simple v.2 (with LoRA Support for Low VRAM)

Hi friends, IAMCCS here — and today I’m releasing two new workflows for Qwen Image Edit 2509, both deeply integrated with the Prompt Enhancer and the upgraded IAMCCS_nodes tools. This post expands and updates my previous posts and pushes them into a fully working release with: ✓ A Face Swap Workflow (v1.0.1) ✓ A Qwen […]

UPDATE IAMCCS CUSTOM NODES! — New Prompt Enhancer v1.0.1 + Massive IAMCCS_nodes 1.3.0 Upgrade

Hi folks, this is CCS, and today we’ve got a double-upgrade drop — two huge updates to the tools powering your Qwen and WAN workflows: ➜ IAMCCS_QE_PROMPT_ENHANCER v1.0.1 👉 https://github.com/IAMCCS/IAMCCS_QE_PROMPT_ENHANCER ➜ IAMCCS_nodes v1.3.0 (major update) 👉 https://github.com/IAMCCS/IAMCCS_nodes This is the kind of update that doesn’t just “fix things”… It makes your entire pipeline smarter, quicker, and […]

LET’S TRY QWEN IMAGE EDIT 2509 WITH OUR NEW CUSTOM NODES!

Hi folks, this is IAMCCS, and today we’ll dive into the real thing — the acclaimed model from Alibaba, Qwen Image Edit 2509, working side-by-side with our new custom nodes: IAMCCS_QE_Prompt_Enhancer and my little baby, IAMCCS_annotate! We’ll use models like the Nunchaku version and the normal diffusers (with a deeper dive into the Nunchaku installation […]

Two brand-new CUSTOM NODES for ComfyUI — IAMCCS_annotate & IAMCCS_QE_Prompt_Enhancer!!

Hi folks, this is IAMCCS. I’ve been working on something special for you. Today I’m going to show you two brand-new utilities just released after the acclaimed IAMCCS_nodes pack. These tools aren’t ordinary nodes — they’re creative extensions for the way you think and work inside ComfyUI. As a thank you to my Patreon community, […]

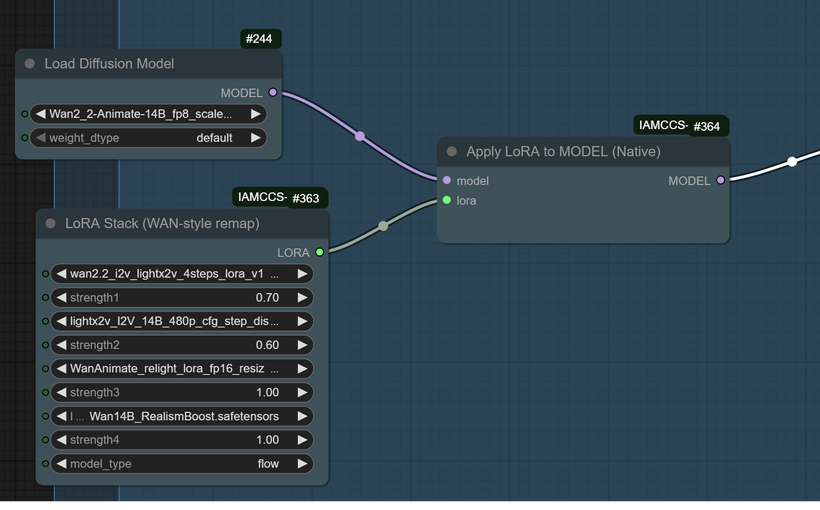

New! IAMCCS Native WAN 2.2 Animate Workflow – plus IAMCCS-node (LoRA fix) and Long video!

Hi folks, this is CCS, and today I’m sharing a new native workflow for WAN 2.2 Animate — extended, refined, and made stable for everyone who’s been struggling with the “LoRA key not loaded” problem. Many of you have run into it: LoRAs simply refuse to load inside native WAN workflows. They only seemed to work […]

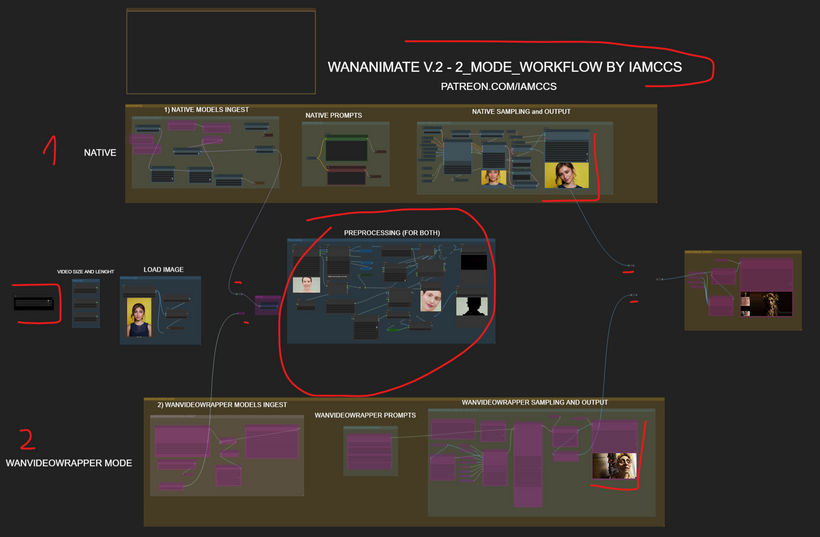

WANANIMATE v.2 is here! – (plus updated workflow v.2 for low VRAM users)

Hi folks, this is CCS — and as my elven friend Velgen Delois III already told you, Wanimate V2 is finally here — a real leap in motion realism and expressive depth. Let’s dive in. The motion feels way more natural, facial expressions finally sync with emotional tone, and the overall video flow is tighter […]

ComfyUI: WAN 2.2 video extended

The Extended Cinematic Vision: WAN 2.2 Workflow Breakdown. Hi, this is CCS. Today I want to give you a deep dive into my latest extended video generation workflow using the formidable WAN 2.2 model. This setup isn’t about generating a quick clip; it’s a systematic approach to crafting long-form, high-quality, and visually consistent cinematic sequences […]

WANANIMATE – BACKGROUND ADD ComfyUI workflow

Hi, my friends. Today I’m presenting a cutting-edge ComfyUI workflow that addresses a frequent request from the community: adding a dynamic background to the final video output of a WanAnimate generation using the Phantom-Wan model. This setup is a potent demonstration of how modular tools like ComfyUI allow for complex, multi-stage creative processes. […]